ChatGPT vs Perplexity for AI Visibility in 2026: Citations, Traffic, and Conversion Compared

Across 680 million AI citations analyzed by Averi in early 2026, only 11% of domains were cited by both ChatGPT and Perplexity. Whitehat SEO’s independent study of 118,000 responses found the same number. Ahrefs’ query-level analysis, measuring overlap with Google’s top 10 instead, found a similarly thin 12% or so. Three different methodologies, one conclusion: the brand graphs these two engines see are largely disjoint.

Then came February 2026. On the 9th, OpenAI launched ads inside ChatGPT for the first time. Just over a week later, Perplexity confirmed it was abandoning advertising for good, publicly stating that paid placements would undermine the trust that drives Pro subscriptions. Two of the most prominent AI search products on the planet picked diametrically opposed business models within days of each other.

If you’re a marketer, an SEO, or a founder trying to figure out where your brand should be visible, that combination matters. The two engines reward different sources, send different volumes of traffic, and convert that traffic at very different rates. You can win on one and be effectively invisible on the other. This post unpacks what the 2025–2026 data actually says about both — and what it means for how you should think about AI visibility.

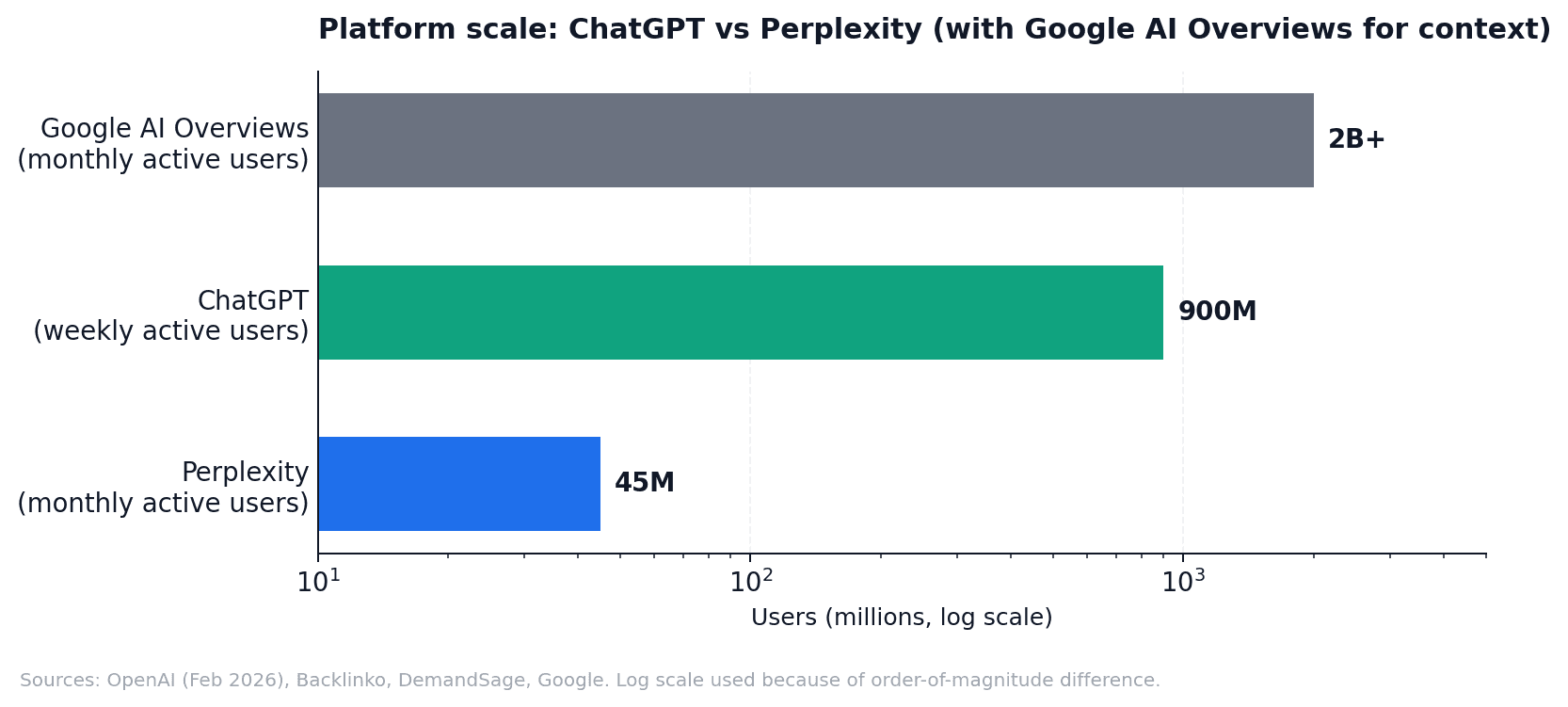

Scale and audience: 900M vs 45M, but it’s not that simple

ChatGPT reached 900 million weekly active users in February 2026 (OpenAI), processes roughly 2.5 billion prompts per day, and held about 64.6% of global generative AI website traffic in January 2026 (Similarweb). It is, by a wide margin, the dominant consumer AI product on the planet.

Perplexity is far smaller. Estimates of monthly active users in early 2026 range from 22 million to 45 million depending on the source (Backlinko, DemandSage, AI Business Weekly), with the company itself claiming higher figures to the Financial Times. Even at the upper end, that’s roughly one-twentieth the size of ChatGPT, and because it sets a monthly count against ChatGPT’s weekly one, the true gap is wider still.

Chart 1. Sources: OpenAI (Feb 2026 announcement), Backlinko, DemandSage, Google. Note the log scale — the linear gap is much larger than it appears.

But raw user count is the wrong way to think about visibility, because audience composition matters more for high-consideration purchases than audience size. WARC’s late-2025 audit of Perplexity users found 80% are college graduates, 65% are high-income white-collar workers, and 30% hold senior leadership roles. Click-Vision and Similarweb data show 41% work in tech, finance, or knowledge industries. The average Perplexity session runs 11–12 minutes, and 80%+ of usage is on desktop — a remarkable inversion from early 2024, when most usage was mobile. People use Perplexity like a research workstation.

ChatGPT skews more mainstream. About 53–57% of users are under 35; the gender split has narrowed to roughly 52% women per OpenAI’s own community research; 73% of usage is non-work; and “asking” (information-seeking) accounts for 49% of all messages. ChatGPT is where the average consumer asks questions. Perplexity is where the senior decision-maker compares options. Both matter — they just matter for different things.

The citation chasm: how each platform actually picks sources

The single most important difference between ChatGPT and Perplexity isn’t their size or audience — it’s how they treat sources. ChatGPT mentions brands often but cites them sparingly. Perplexity does the opposite: it cites densely but commits to a short, high-authority shortlist. The mechanics are worth understanding in detail, because they dictate what content actually earns visibility on each.

ChatGPT: lots of mentions, fewer links, heavy directory reliance

BrightEdge’s AI Catalyst tracker found ChatGPT mentions brands 3.2 times more often than it cites them with links: about 2.37 brand mentions per response versus 0.73 linked brand citations. Yext’s study of 6.8 million citations found that 48.7% of ChatGPT citations come from third-party listings: Yelp, TripAdvisor, MapQuest, BBB, and industry-specific directories. For subjective “best of” queries, that share stays close to half, at 46.3%.
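Measuring this split for your own brand means counting mentions and citations separately for each answer. The sketch below is a minimal illustration, not BrightEdge’s methodology: it assumes you have already captured each answer’s text and its list of cited URLs, and the brand name `Acme`, the domain `acme.com`, and the sample answer are hypothetical.

```python
import re
from urllib.parse import urlparse


def mention_and_citation_counts(answer_text: str, cited_urls: list[str],
                                brand_name: str, brand_domain: str) -> tuple[int, int]:
    """Count brand-name mentions in the answer text and links to the brand's domain."""
    # Naive whole-word, case-insensitive match on the brand name.
    pattern = rf"\b{re.escape(brand_name)}\b"
    mentions = len(re.findall(pattern, answer_text, flags=re.IGNORECASE))

    # A citation counts if its host is the brand domain or one of its subdomains.
    domain = brand_domain.lower()
    citations = 0
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            citations += 1
    return mentions, citations


if __name__ == "__main__":
    answer = "Acme and Globex both do this. Acme is cheaper, and reviewers often prefer Acme."
    cited = ["https://www.acme.com/pricing", "https://en.wikipedia.org/wiki/Globex"]
    print(mention_and_citation_counts(answer, cited, "Acme", "acme.com"))
```

For the sample answer it returns `(3, 1)`: three mentions of Acme, one link to acme.com. Summed across many prompts, total mentions divided by total citations gives a ratio comparable in spirit to the 3.2× figure; an answer with citations but zero mentions is a link without a name.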

Wikipedia accounts for roughly 16.3% of ChatGPT’s top-cited domain share (Profound’s analysis of 680 million citations). News media — Reuters, Apple News, AP wire services — fills out the next tier. LinkedIn surged from the #11 most-cited source in November 2025 to roughly #5 by February 2026, and now appears in about 14.3% of ChatGPT responses (Semrush, 325,000 prompts). User-generated content — Reddit, Quora — dropped out of ChatGPT’s top 10 entirely after a series of platform updates in late 2025, falling to roughly 0.5% of citations (BrightEdge), although Tinuiti’s Q1 2026 data shows Reddit creeping back up. The citation graph is volatile.

Two findings from Ahrefs’ work matter most for marketers. First, 67% of the top 1,000 pages ChatGPT cites are off-limits to brand SEO — Wikipedia, government and educational institutions, Apple’s App Store, major news media, encyclopedic references. You can’t pitch your way into them. Second, 28% of ChatGPT’s most-cited pages have zero Google organic visibility. Eighty percent of ChatGPT-cited URLs don’t rank in Google’s top 100. The two visibility surfaces simply don’t correlate the way intuition suggests.

Perplexity: dense citations, high authority bias, brutal freshness

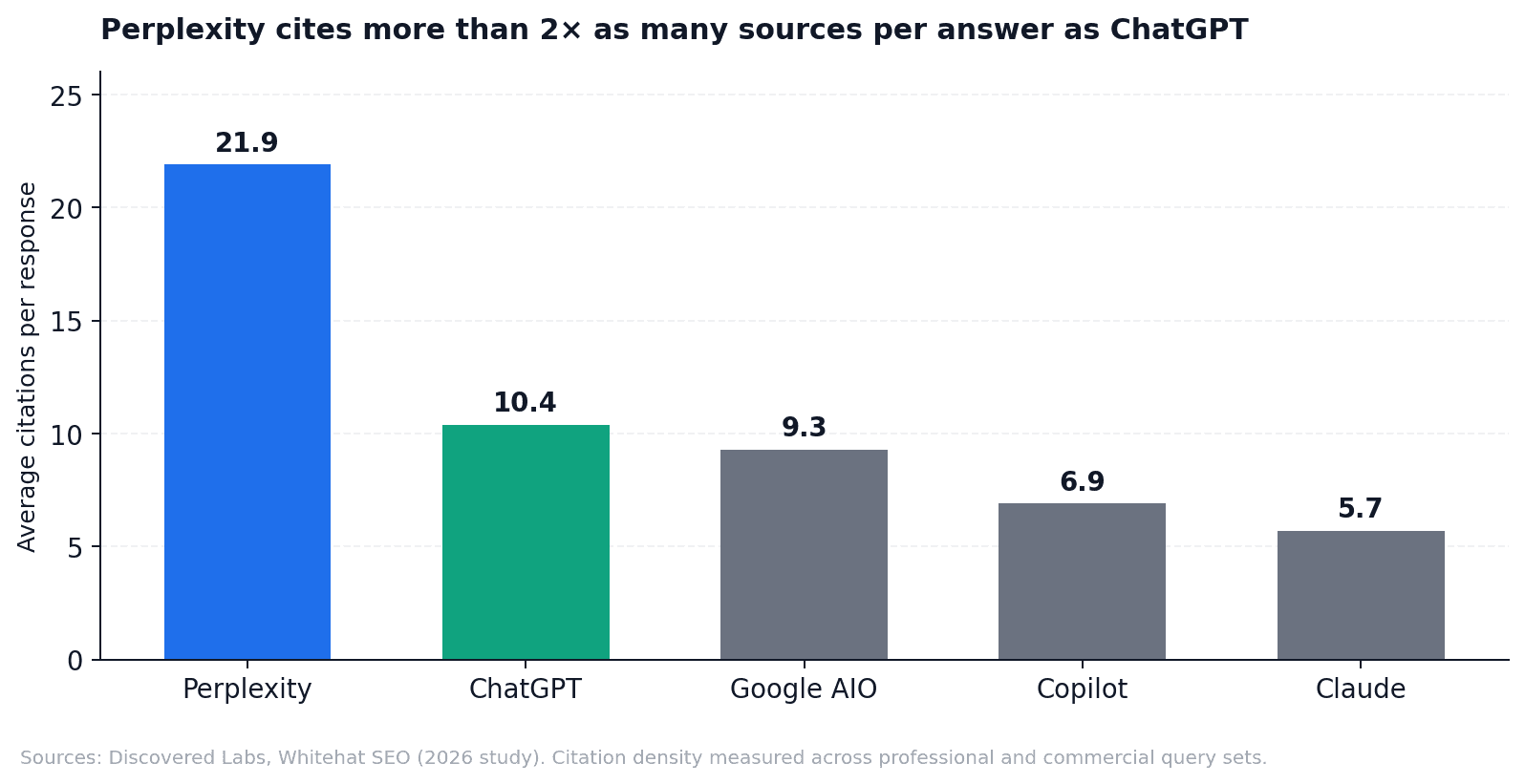

Perplexity averages 21.9 citations per response — more than double ChatGPT’s 10.4 (Discovered Labs, Whitehat SEO, 2026). It also generates roughly 20 times more website links than brand-name mentions, the inverse of ChatGPT’s pattern. Brands often get cited on Perplexity without being named in the answer text — a “ghost citation” dynamic where you drive traffic but lose mindshare.

Chart 2. Citation density across platforms. Perplexity is the outlier; ChatGPT and Google AIO occupy the middle; Claude and Copilot cite fewest sources per answer. Sources: Discovered Labs, Whitehat SEO.

Perplexity’s freshness sensitivity is also extreme. Whitehat SEO’s analysis showed an 82% citation rate for content updated within 30 days, falling to 37% for older content. The platform reads roughly ten pages per query but only cites three or four: it commits to a tight, authoritative shortlist. BrightEdge found 86% of Perplexity’s brand mentions land in position 5 or earlier in a list, versus the longer, more exhaustive lists ChatGPT tends to produce.
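A freshness threshold like this is easy to turn into a recurring check. The sketch below flags pages whose last update falls outside a 30-day window, mirroring the Whitehat SEO cutoff; it assumes you can export last-updated dates (for example from your CMS or sitemap `lastmod` values), and the page paths and dates are made up.

```python
from datetime import date, timedelta

# The 30-day window mirrors the Whitehat SEO cutoff; adjust it to your own cadence.
FRESHNESS_WINDOW_DAYS = 30


def stale_pages(last_updated: dict[str, date], today: date,
                window_days: int = FRESHNESS_WINDOW_DAYS) -> list[str]:
    """Return pages last updated before the freshness window, oldest first."""
    cutoff = today - timedelta(days=window_days)
    stale = [(updated, page) for page, updated in last_updated.items() if updated < cutoff]
    return [page for updated, page in sorted(stale)]


if __name__ == "__main__":
    pages = {
        "/pricing": date(2026, 3, 15),
        "/features": date(2026, 1, 10),
        "/compare": date(2025, 11, 2),
    }
    print(stale_pages(pages, today=date(2026, 3, 31)))
```

For the sample pages it returns `/compare` and `/features`, oldest first; `/pricing`, updated 16 days before the reference date, passes.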

Where does Perplexity pull from? Reddit was its top single source through most of 2025 (6.6% of total citations, 46.7% of top-10 share according to Profound) — until Reddit sued Perplexity over scraping in October 2025, after which Perplexity’s Reddit citations dropped 86% and YouTube partially filled the gap (Conductor). YouTube now sits at roughly 16.1% of Perplexity’s top-10 share (Ahrefs). Perplexity also has the highest .edu share (3.2%) and the highest international country-code TLD share (4.4%) of any major engine, with strong concentration in institutional medical, government, and academic publishers.

Side by side

| Citation behavior | ChatGPT | Perplexity |
|---|---|---|
| Citations per response (avg.) | 10.4 | 21.9 |
| Mention-to-citation ratio | 3.2× more mentions than citations | 20× more links than brand mentions |
| Top single source | Wikipedia (~16% of top citations) | Reddit / YouTube (volatile post-Oct 2025) |
| Third-party directory share | 48.7% (Yelp, TripAdvisor, MapQuest, BBB) | Lower; tilts to niche / vertical directories |
| Off-limits-to-brands ceiling | 67% of top 1,000 cited pages | Lower; niche directories more pitchable |
| Recency bias | Cites content ~458 days newer than Google | Extreme: 82% citation rate for content updated within 30 days vs. 37% for older |

Table 1. Sources: BrightEdge AI Catalyst, Yext (6.8M citation study), Profound (680M citations), Ahrefs Brand Radar, Discovered Labs, Whitehat SEO (2025–2026).

The 11% overlap, unpacked

Pull all of this together and the headline finding makes intuitive sense. ChatGPT trusts what the consumer internet has aggregated about you — directories, Wikipedia, news, LinkedIn, and a long tail of editorial sources. Perplexity trusts what high-authority specialist sources say about you, refreshed in the last few weeks. There’s only so much overlap between those two universes, and the data confirms it: roughly 11% of cited domains appear on both, depending on how you measure (Averi 680M citation study; Whitehat SEO 118K response analysis; Slate HQ 300K cross-platform sample). BrightEdge’s benchmark across ChatGPT, Google AI Mode, and AI Overviews found the engines disagreed on which brands to recommend 62% of the time.

Practically, this means rank tracking and brand visibility on one platform have very limited predictive power for the other. If you’ve been monitoring ChatGPT and assuming Perplexity will follow, you’ve been monitoring roughly 11% of the picture.
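The studies above may not all define “overlap” the same way. The sketch below assumes the simplest common definition: domains cited by both platforms divided by all distinct domains cited by either (intersection over union). The domain lists are toy data, not results from any study.

```python
def cross_platform_overlap(domains_a: set[str], domains_b: set[str]) -> float:
    """Share of all cited domains that both platforms cite (intersection over union)."""
    union = domains_a | domains_b
    if not union:
        return 0.0
    return len(domains_a & domains_b) / len(union)


if __name__ == "__main__":
    chatgpt = {"wikipedia.org", "yelp.com", "linkedin.com", "reuters.com", "bbb.org"}
    perplexity = {"youtube.com", "nih.gov", "reuters.com", "g2.com", "harvard.edu"}
    print(f"{cross_platform_overlap(chatgpt, perplexity):.0%}")
```

With nine distinct domains and only reuters.com shared, it prints 11%. Run on your own tracked citations, the same function shows how much of your visibility on one engine carries over to the other.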

Traffic share vs. conversion: the inversion

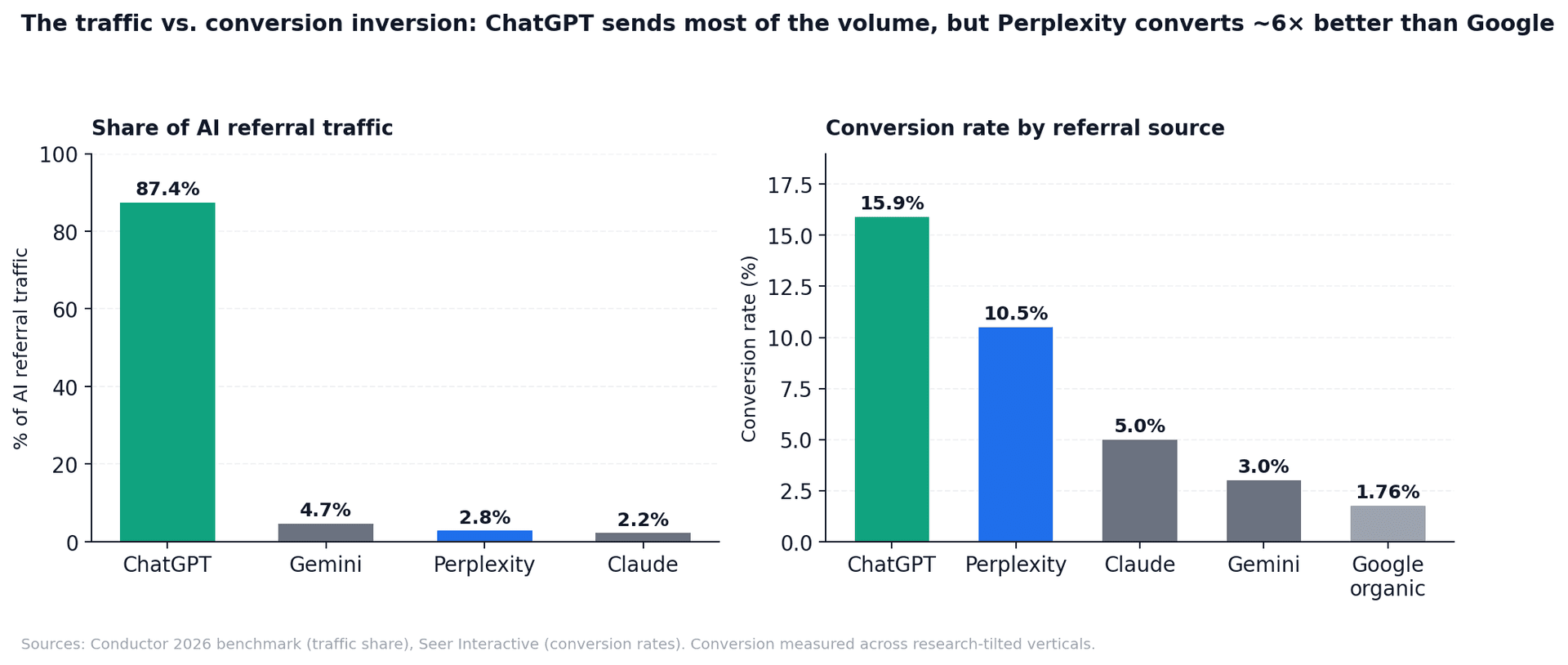

Citations matter because they translate into traffic and revenue, but traffic share and conversion rate tell two very different stories.

ChatGPT generates roughly 87.4% of all AI referral traffic across industries, per Conductor’s 2026 benchmark (broadly consistent with Similarweb’s figure of around 77–85% across 2025). Perplexity drives about 2.8%, and Gemini sits between them at 4.7%. In absolute terms, AI platforms collectively generated 1.13 billion referral visits in June 2025 alone (Similarweb), up 357% year over year. Tiny compared with Google, but compounding fast.

Conversion tells a different story. Seer Interactive’s benchmark study, the most-cited dataset in this space, found ChatGPT-referred sessions converted at 15.9%, Perplexity at 10.5%, Claude at 5.0%, Gemini at 3.0%, and Google organic at 1.76%. Perplexity may send a sliver of the traffic, but by this measure it converts at roughly six times Google organic’s rate. Adobe’s holiday 2025 data corroborated the pattern: AI referrals converted 31% higher than other online sources on average and 54% higher on Thanksgiving Day. Semrush put LLM visitors at 4.4× the conversion rate of organic search.

Chart 3. ChatGPT dominates traffic share; both ChatGPT and Perplexity convert far above Google organic. Sources: Conductor 2026 benchmark, Seer Interactive.
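To see why traffic share and conversion rate have to be read together, here is the arithmetic as a short sketch. It multiplies Conductor’s traffic shares by Seer Interactive’s conversion rates; those figures come from different studies and samples, so treat the output as illustrative, not as a measured result.

```python
GOOGLE_ORGANIC_CVR = 1.76  # percent, Seer Interactive

# Referral traffic share (Conductor 2026) and conversion rate (Seer Interactive), both in percent.
PLATFORMS = {
    "ChatGPT": {"traffic_share": 87.4, "cvr": 15.9},
    "Perplexity": {"traffic_share": 2.8, "cvr": 10.5},
    "Gemini": {"traffic_share": 4.7, "cvr": 3.0},
}


def lift_vs_google(cvr: float) -> float:
    """Conversion rate as a multiple of Google organic's."""
    return cvr / GOOGLE_ORGANIC_CVR


def conversions_per_10k_ai_visits(traffic_share: float, cvr: float) -> float:
    """Of 10,000 AI referral visits, traffic_share% come from this platform and cvr% of those convert."""
    return 10_000 * (traffic_share / 100) * (cvr / 100)


if __name__ == "__main__":
    for name, p in PLATFORMS.items():
        print(f"{name}: {lift_vs_google(p['cvr']):.1f}x Google organic, "
              f"{conversions_per_10k_ai_visits(p['traffic_share'], p['cvr']):.1f} "
              f"conversions per 10k AI visits")
```

Run as-is, it shows Perplexity converting at about 6× Google organic’s rate yet contributing roughly 29 conversions per 10,000 AI referral visits, against roughly 1,390 for ChatGPT: a high conversion rate cannot make up for a small traffic share on its own.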

One honest caveat: a 12-month study by Schulze and Kaiser covering 973 websites and roughly $20 billion in revenue found that ChatGPT referrals actually underperformed Google organic on transactional purchases by about 13%. The reconciliation, per a Practical Ecommerce analysis, is that AI referrals over-perform in research-heavy verticals (B2B SaaS, professional services, finance, electronics) and under-perform in impulse categories (apparel, grocery). Adobe’s data fits this pattern — apparel and grocery were the weakest AI-conversion verticals; electronics and jewelry were the strongest. The headline is right: AI traffic converts well. The asterisk is that it converts especially well for the kind of considered, comparison-driven purchase that AI is good at researching in the first place.

Monetization is now diverging — which makes tracking both more important, not less

Until February 2026, both platforms looked broadly similar from a brand’s perspective: subscription-funded research tools where the only path to visibility was being cited. That changed with two announcements just over a week apart.

On February 9, 2026, OpenAI launched ads inside ChatGPT for free and Go-tier US users — roughly $60 CPM, 600+ advertisers in the first six weeks, and $100 million in ad ARR within two months (TechCrunch, Axios). OpenAI told prospective IPO investors that this revenue line will scale to $2.5 billion in 2026 and $100 billion by 2030 (Axios, April 2026). Those out-year figures are projections shared with investors, not realized numbers, but they signal where ChatGPT’s monetization is heading.

On February 18, Perplexity confirmed to the Financial Times that it was abandoning its November 2024 ad pilot for good. A Perplexity executive framed it bluntly: “A user needs to believe this is the best possible answer… ads are misaligned with what users want.” Search Engine Land summarized the consequence: brands now have no way to get visibility inside Perplexity’s answers other than via organic citations.

| Dimension | ChatGPT (OpenAI) | Perplexity |
|---|---|---|
| Ads in answers | Live as of Feb 9, 2026 (~$60 CPM) | Abandoned Feb 18, 2026 |
| Path to paid visibility | Sponsored placements available | None; earned citations only |
| Commerce | Apps directory + Walmart Sparky integration; Instant Checkout deprioritized March 2026 | Buy with Pro, Snap to Shop, free Merchant Program |
| Revenue model | Subscriptions + ads (~$2.5B projected ad revenue in 2026) | Subscriptions only ($500–656M ARR target for 2026) |
| Implication for brands | Paid + organic strategy possible | Earned citations are the only lever |

Table 2. Sources: OpenAI announcements, Financial Times, Search Engine Land, TechCrunch, Axios, CNBC (Feb–April 2026).

The split has a counter-intuitive consequence. You might expect that with paid placements available on ChatGPT, brands could de-prioritize organic optimization there. The opposite is true: the moment ad inventory exists, organic visibility becomes more valuable, because you can no longer be sure whether a competitor’s mention is editorial or paid. And on Perplexity, where there are no ads at all, every citation is signal. Tracking both becomes more important after February 2026, not less.

What wins where: the per-platform playbook

Because the two engines reward such different signals, the optimization tactics that move the needle on each are quite distinct. The table below summarizes what the evidence supports.

| Tactic | ChatGPT | Perplexity |
|---|---|---|
| Third-party directory presence (Yelp, BBB, G2, Capterra, TripAdvisor) | High priority: drives 48.7% of citations | Medium: focus on niche / vertical directories |
| Wikipedia / Wikidata entity strength | High priority: ~16% of top citations | Lower priority |
| LinkedIn original posts | High priority: surged to #5 most-cited domain | Medium |
| Domain authority / referring domains | Critical: 2× more important than for AI Mode | Important, but content quality outranks DA |
| Content freshness cadence | Quarterly is sufficient | Monthly minimum on key pages |
| Reddit / Quora mentions | Volume-based (millions of community mentions) | |

Table 3. Sources: Yext, BrightEdge, Profound, SE Ranking, Ahrefs, Discovered Labs, Search Engine Land (2025–2026).

A note on llms.txt, since it keeps coming up: as of mid-2025, the major LLM crawlers do not fetch it. Flavio Longato’s server-log audit, Ahrefs’ commentary, and SE Ranking’s November 2025 study all found negligible citation impact, and John Mueller publicly compared it to the long-discredited keywords meta tag. What does matter is your robots.txt: make sure GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, and Google-Extended are explicitly allowed. The vendors say their crawlers honor these rules, and blocking them, deliberately or through a blanket rule, silently costs visibility.
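You can sanity-check a robots.txt file against these user-agent tokens with Python’s standard-library `urllib.robotparser`. The robots.txt content and the `example.com` URLs below are hypothetical. One caveat: `urllib.robotparser` applies the first matching rule in a group, while RFC 9309 (and Google) use the most specific match, so the example lists `Disallow` before `Allow` to get the same answer under both.

```python
from urllib.robotparser import RobotFileParser

# AI user-agent tokens named in this article.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "OAI-SearchBot", "Google-Extended"]

# Hypothetical robots.txt that explicitly allows the AI agents on public pages.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: ClaudeBot
User-agent: PerplexityBot
User-agent: OAI-SearchBot
User-agent: Google-Extended
Disallow: /admin/
Allow: /

User-agent: *
Disallow: /admin/
"""


def audit_ai_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Return {agent: allowed?} for each AI agent against a single URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS}


if __name__ == "__main__":
    print(audit_ai_access(ROBOTS_TXT, "https://example.com/pricing"))
    # A blanket block like this one silently shuts out every AI agent as well.
    print(audit_ai_access("User-agent: *\nDisallow: /\n", "https://example.com/pricing"))
```

The first call reports every agent as allowed on `/pricing`; the second shows how a site-wide `Disallow: /` for `*` blocks all five without naming any of them.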

Why Google Search Console alone leaves you blind

Most brands still measure visibility through Google Search Console: clicks, impressions, queries from Google search. That’s a complete view of one channel and a non-existent view of every other one. GSC sees nothing that happens inside ChatGPT, Perplexity, Claude, or even much of what Gemini does outside of classic Google web search.

Ahrefs documented an instructive example: a single Lifehack article that had effectively no GSC clicks was cited by ChatGPT roughly 1,400 times. 80% of LLM-cited URLs don’t rank in Google’s top 100. A page can have zero traditional search visibility and still be one of the most-cited sources in your category on AI — or vice versa. We’d call this the “GSC-to-AI gap”: pages that rank on Google but are invisible in AI, and pages cited by AI that don’t rank on Google. Both are blind spots that compound over time if you don’t see them.
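Finding both blind spots comes down to comparing two URL lists. The sketch below assumes hypothetical inputs: a list of URLs that earn Google visibility (for example from a Search Console performance export) and a list of URLs that AI engines cite (from whatever citation tracking you run). The normalization is deliberately crude and only handles case, query strings, and trailing slashes.

```python
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Lowercase the host and drop scheme, query, fragment, and trailing slash."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    return parts.netloc.lower() + path


def gsc_to_ai_gap(gsc_urls: list[str], ai_cited_urls: list[str]) -> dict[str, set[str]]:
    """Split pages into Google-only, AI-only, and both."""
    google = {normalize(u) for u in gsc_urls}
    ai = {normalize(u) for u in ai_cited_urls}
    return {
        "google_only": google - ai,
        "ai_only": ai - google,
        "both": google & ai,
    }


if __name__ == "__main__":
    gsc = ["https://example.com/pricing/", "https://example.com/blog/guide"]
    ai = ["https://Example.com/blog/guide?ref=ai", "https://example.com/blog/old-post"]
    for bucket, pages in gsc_to_ai_gap(gsc, ai).items():
        print(bucket, sorted(pages))
```

Here `/pricing` lands in the Google-only bucket, `/blog/old-post` in the AI-only bucket, and `/blog/guide` in both once its casing and query string are normalized away.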

What to do this quarter, by role

If you read nothing else, here’s the per-role short list. None of these require a six-figure budget; all of them assume you already know your top 5–10 commercial pages.

| If you're a… | Do these three things first |
|---|---|
| SMB owner | 1. Audit your directory presence: Yelp, BBB, TripAdvisor, Foursquare, and at least two industry-specific directories. 2. Refresh your top 5 commercial pages this quarter with visible "last updated" dates and quantified claims. 3. Confirm your robots.txt allows GPTBot, ClaudeBot, PerplexityBot, OAI-SearchBot, and Google-Extended. |
| In-house SEO / marketer | 1. Add per-platform AI citation tracking alongside GSC: at minimum ChatGPT and Perplexity, ideally Claude and Gemini too. 2. Run a citation gap analysis vs. your top three competitors on each platform. 3. Convert your top 5 commercial pages to BLUF structure with quantified, sourceable claims. |
| Agency | 1. Add per-platform AI visibility scores to client reports alongside Google rankings. 2. Track the mention-vs-citation split on ChatGPT specifically; they're not the same metric. 3. Build a quarterly competitor citation share report. Only 33% of queries return the same brand list across ChatGPT, Google AI Mode, and AI Overviews, and that's where the differentiation argument lives. |

Table 4. Action items mapped by role and ordered by impact-per-effort.
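The competitor citation share report in the agency row is, at its core, a counting exercise. The sketch below assumes you already log one (platform, brand) pair per citation from your tracking; the brand names and counts are placeholders.

```python
from collections import Counter


def citation_share(citations: list[tuple[str, str]]) -> dict[str, dict[str, float]]:
    """Per platform, each brand's share of the tracked citations.

    `citations` holds one (platform, brand) pair per citation observed.
    """
    counts_by_platform: dict[str, Counter] = {}
    for platform, brand in citations:
        counts_by_platform.setdefault(platform, Counter())[brand] += 1

    shares = {}
    for platform, counts in counts_by_platform.items():
        total = sum(counts.values())
        shares[platform] = {brand: n / total for brand, n in counts.items()}
    return shares


if __name__ == "__main__":
    observed = [
        ("ChatGPT", "YourBrand"), ("ChatGPT", "CompetitorA"),
        ("ChatGPT", "CompetitorA"), ("ChatGPT", "CompetitorB"),
        ("Perplexity", "YourBrand"), ("Perplexity", "YourBrand"),
        ("Perplexity", "CompetitorB"),
    ]
    for platform, by_brand in citation_share(observed).items():
        print(platform, {b: f"{s:.0%}" for b, s in by_brand.items()})
```

Computing shares per platform, not pooled, keeps the picture honest: in this toy data the same brand holds 25% of ChatGPT citations but two-thirds of Perplexity’s.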

The cost of being invisible compounds

The visibility surface is fragmenting. Five years ago, ranking on Google was effectively the same as being findable. In 2026, your brand can be cited 1,400 times by ChatGPT and have zero GSC clicks, or rank #1 in Google and be invisible on Perplexity. AI referral traffic grew 527% year over year (Search Engine Land); AI-driven retail traffic in the 2025 holiday season was up 693% per Adobe; revenue per AI-referred visit climbed 254% year over year. The early-cited domains compound trust. The late ones spend the rest of the decade trying to catch up.

QuickSEO was built for exactly this gap: it combines Google Search Console analytics with brand visibility tracking across ChatGPT, Claude, Gemini, and Perplexity in one dashboard, so you can see where your traditional rankings and your AI citations agree, where they diverge, and where you’re bleeding visibility you didn’t know you had. See how it works at quickseo.ai.

Notes on the data

Several figures cited above carry uncertainty worth flagging. Perplexity’s monthly active user count is reported anywhere from 22M to 100M depending on the source, and the company itself has claimed figures above the third-party range; we used 45M, the upper end of the third-party estimates. OpenAI’s 2027–2030 ad revenue figures are projections shared with investors, not realized revenue. Conversion rates from Seer Interactive are sample-tilted toward research-heavy verticals; the Schulze–Kaiser study found different results in transactional retail. Citation behavior shifts quickly (Reddit’s share on Perplexity dropped 86% within weeks of the October 2025 lawsuit), so anything cited as “current” in this post is timestamped to the studies referenced, not a permanent state.
